SpeedyGA is a vectorized implementation of the Simple Genetic Algorithm in the Matlab programming language.

Matlab is optimized for performing operations on arrays. Loops, especially nested loops, tend to run slowly in Matlab. It is often possible to significantly improve the performance of a Matlab program by converting its loops into array operations. This process is called vectorization. Matlab provides a rich set of functions and many expressive indexing schemes that make it possible to vectorize code. Such code not only runs faster; it is also shorter and simpler to understand and change (provided, of course, that you know a little Matlab).
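To make the idea concrete, here is a small sketch (not taken from SpeedyGA) that squares the positive entries of a vector and zeroes the rest, first with a loop and then with logical indexing. The variable names and the data are made up for illustration.

```matlab
% Illustrative data (hypothetical, not from SpeedyGA)
x = [3 -1 4 -1 5 -9 2 -6];

% Loop version: visit each element and test it individually
yLoop = zeros(size(x));
for k = 1:numel(x)
    if x(k) > 0
        yLoop(k) = x(k)^2;
    end
end

% Vectorized version: build a logical mask once, then index with it
pos = x > 0;                 % logical vector, true where x is positive
yVec = zeros(size(x));
yVec(pos) = x(pos).^2;       % element-wise square of the selected entries
```

Both versions produce the same result; the second states the intent (select, then transform) in two lines and leaves the iteration to Matlab.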

Genetic Algorithms implemented in C/C++ or Java typically contain multiple nested loops (over generations, individuals, and bits), so direct ports of such implementations to Matlab tend to run very slowly. Many of the nested loops found in a typical GA implementation have been eliminated from SpeedyGA. The resulting code is short, fast and simple. It is indeed a delightful coincidence when the constructs of a programming language match a programming task so well that a program can be written this succinctly.
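As a rough illustration of how the per-individual and per-bit loops can disappear, the sketch below performs one-point crossover and bit-flip mutation on a whole population at once. It is my own minimal example under assumed names (`parentsA`, `parentsB`, `cut`, `pm`), not the code SpeedyGA actually uses, and it sticks to `rand`, `ceil` and `repmat` so that it should also run on older Matlab releases.

```matlab
popSize = 6;      % number of parent pairs (assumed)
len     = 8;      % bits per individual (assumed)
pm      = 0.01;   % per-bit mutation probability (assumed)

% Two parent populations stored as logical matrices, one individual per row
parentsA = rand(popSize, len) < 0.5;
parentsB = rand(popSize, len) < 0.5;

% One crossover point per pair, in 1..len-1 (rand returns values in (0,1))
cut = ceil(rand(popSize, 1) * (len - 1));

% mask(i,j) is true when bit j of child i should come from parent A
mask = repmat(1:len, popSize, 1) <= repmat(cut, 1, len);

% Start from parent B and overwrite the head of each row with parent A
children = parentsB;
children(mask) = parentsA(mask);

% Bit-flip mutation: xor with a random mask flips exactly the selected bits
flips   = rand(popSize, len) < pm;
mutated = xor(children, flips);
```

The only "loop" left in a GA written this way is the one over generations; everything inside a generation becomes a handful of array operations.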

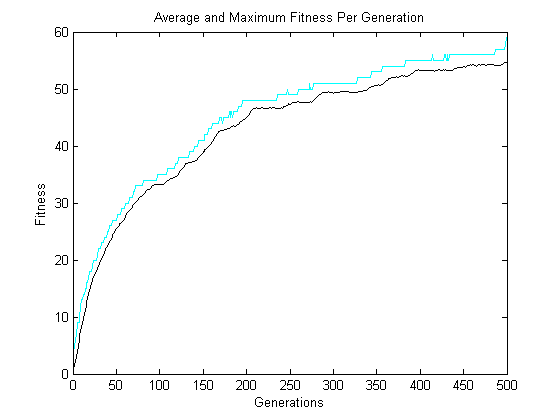

SpeedyGA is proof that Matlab is a useful language for the rapid prototyping of Genetic Algorithms. This, in addition to Matlab’s extensive data visualization capabilities, makes Matlab an extremely useful platform for the experimental analysis of GAs.

SpeedyGA was created and tested under Matlab 7 (R14). Out of the box, it evolves a population against the one-max fitness function. The royal-roads fitness function is also included but is not currently called. If you find SpeedyGA useful, or find any bugs, please let me know.
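For readers unfamiliar with these two fitness functions, here is a hedged sketch of how each can be evaluated for an entire population without loops. One-max simply counts the ones in each individual. The royal-roads version shown is a simplified variant that I am assuming for illustration (each fully set block of `blockSize` consecutive bits contributes `blockSize` to the fitness); the function shipped with SpeedyGA may be defined differently.

```matlab
% A tiny hand-written population: 4 individuals, 8 bits each (illustrative)
pop = logical([1 1 1 1 0 0 0 0;
               1 1 1 1 1 1 1 1;
               1 0 1 1 1 1 1 0;
               0 0 0 0 0 0 0 0]);

% One-max: fitness is the number of ones in each row
oneMax = sum(pop, 2);

% Simplified royal roads (assumed definition): reward only complete blocks
blockSize = 4;                           % must divide the chromosome length
[popSize, len] = size(pop);
numBlocks = len / blockSize;

% Rearrange so that blocks(:, k, i) holds block k of individual i
blocks   = reshape(pop', blockSize, numBlocks, popSize);
complete = all(blocks, 1);               % 1 x numBlocks x popSize
royal    = blockSize * squeeze(sum(complete, 2));   % popSize x 1
```

For this population, `oneMax` is `[4; 8; 6; 0]` and `royal` is `[4; 8; 0; 0]`: the third individual has six ones but no complete block, so it scores zero under the block-based function.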

Enjoy!

SpeedyGA is a revision of the old VectorGA. SpeedyGA has (not surprisingly) been further optimized for speed.

Changes since VectorGA

- Added mutation and crossover mask pregeneration

- Added the option to visualize the changing bit frequencies of a population (very handy for understanding GA dynamics)
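The two changes above can be sketched roughly as follows. This is an illustration under my own assumptions (the array layout, the names `mutMasks` and `freqHistory`, and the parameter values are hypothetical), not an excerpt from SpeedyGA: all mutation masks are drawn in a single call before the run, and the frequency of ones at each locus is recorded every generation.

```matlab
popSize = 20;     % individuals (assumed)
len     = 10;     % bits per individual (assumed)
maxGen  = 5;      % generations (assumed)
pm      = 0.05;   % per-bit mutation probability (assumed)

% Pregenerate every mutation mask up front: one popSize x len slice per generation
mutMasks = rand(popSize, len, maxGen) < pm;

pop = rand(popSize, len) < 0.5;          % random initial population
freqHistory = zeros(maxGen, len);        % row g = bit frequencies at generation g

for gen = 1:maxGen
    % Fraction of individuals with a one at each locus
    freqHistory(gen, :) = sum(pop, 1) / popSize;

    % (selection and crossover omitted in this sketch)

    % Apply the pregenerated mask for this generation
    pop = xor(pop, mutMasks(:, :, gen));
end
```

Pregeneration trades memory for speed: the mask array has `popSize * len * maxGen` elements, so for long runs it may be preferable to generate masks in batches. The recorded history can then be inspected with something like `imagesc(freqHistory)`, which shows loci drifting toward fixation as bands of increasingly uniform color.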